We present a single-image 3D face synthesis technique that can handle challenging facial expressions while recovering fine geometric details. Our technique employs expression analysis for proxy face geometry generation and combines supervised and unsupervised learning for facial detail synthesis. For proxy generation, we conduct emotion prediction to determine a new expression-informed proxy. For detail synthesis, we present a Deep Facial Detail Net (DFDN) based on a Conditional Generative Adversarial Net (CGAN) that employs both geometry and appearance loss functions. For geometry, we capture 366 high-quality 3D scans from 122 different subjects, each under 3 facial expressions. For appearance, we use an additional 163K in-the-wild face images and apply image-based rendering to accommodate lighting variations. Comprehensive experiments demonstrate that our framework can produce high-quality 3D faces with realistic details under challenging facial expressions.

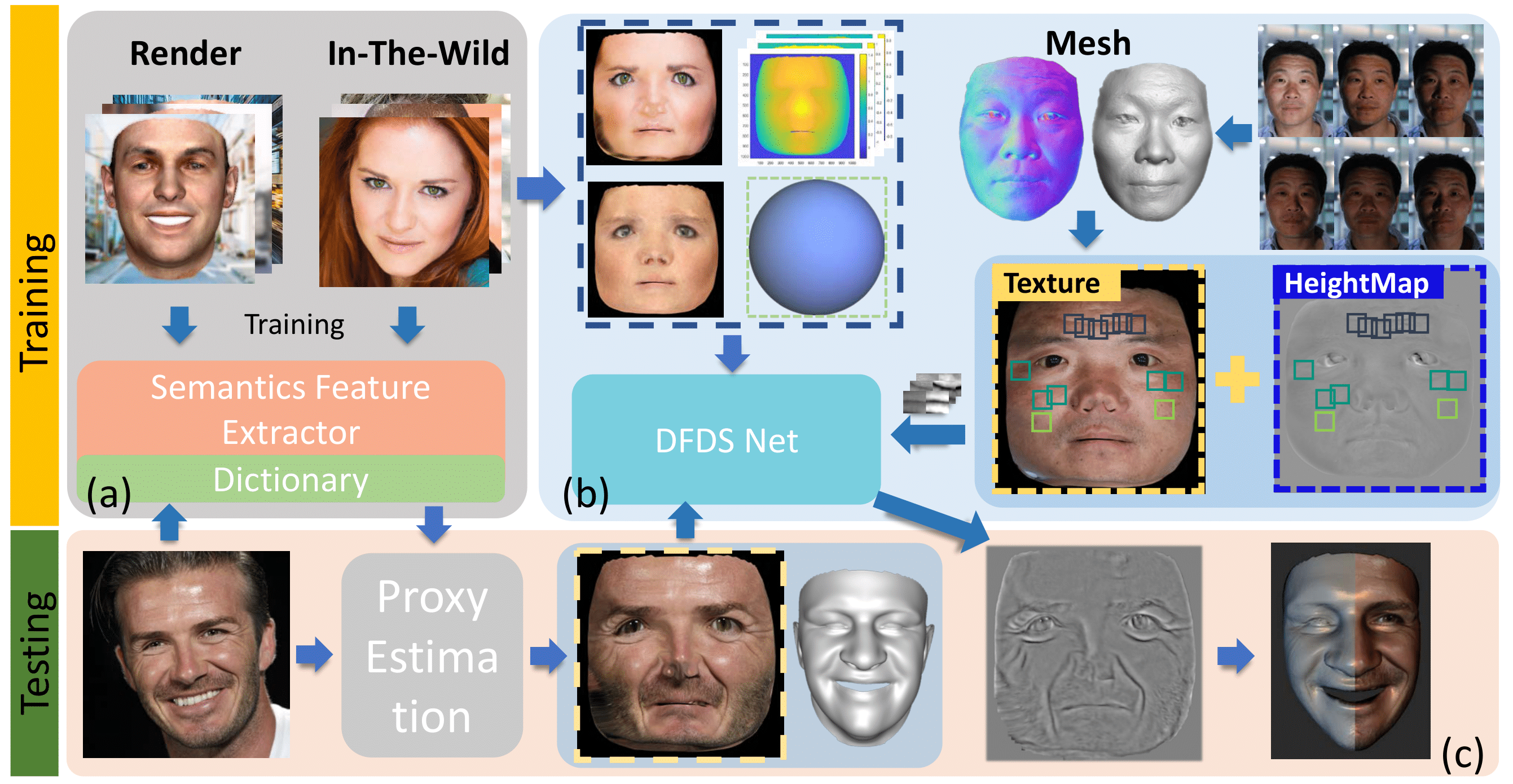

Overview

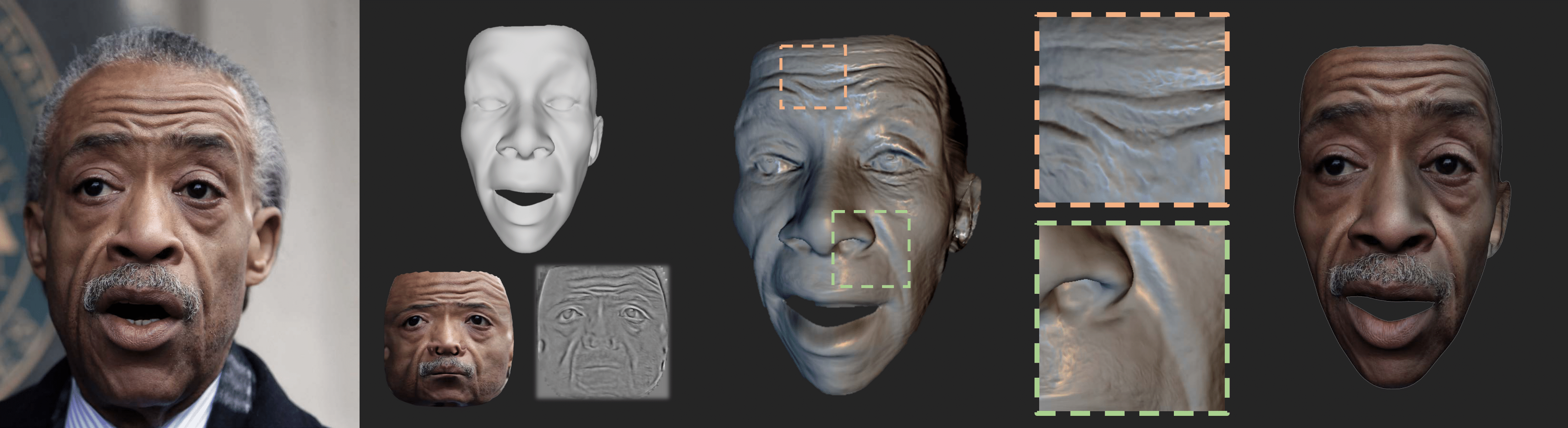

From left to right: input face image; proxy 3D face, texture, and displacement map produced by our framework; detailed face geometry with the estimated displacement map applied to the proxy 3D face; and re-rendered facial image.
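
To make the "displacement map applied to the proxy 3D face" step concrete, here is a minimal sketch of one common way to apply a scalar displacement map to a mesh: each vertex is moved along its unit normal by the value sampled at its UV coordinate. This is an illustration under stated assumptions, not the framework's actual implementation. The function names (`sample_displacement`, `apply_displacement`), the nearest-neighbour lookup, the `scale` parameter, and the UV convention (origin at the bottom-left of the map) are all hypothetical choices.

```python
import numpy as np


def sample_displacement(disp_map, uv):
    """Nearest-neighbour lookup of a 2D displacement map at per-vertex UVs.

    Assumption: uv is an (N, 2) array in [0, 1] with v = 0 at the bottom row
    of the map, so the row index is flipped.
    """
    h, w = disp_map.shape
    cols = np.clip(np.rint(uv[:, 0] * (w - 1)).astype(int), 0, w - 1)
    rows = np.clip(np.rint((1.0 - uv[:, 1]) * (h - 1)).astype(int), 0, h - 1)
    return disp_map[rows, cols]


def apply_displacement(vertices, normals, uv, disp_map, scale=1.0):
    """Offset each proxy vertex along its normal by the sampled displacement.

    vertices, normals: (N, 3) arrays; normals are assumed to be non-zero.
    scale: hypothetical factor converting map values to mesh units.
    """
    unit_normals = normals / np.linalg.norm(normals, axis=1, keepdims=True)
    offsets = sample_displacement(disp_map, uv)
    return vertices + scale * offsets[:, None] * unit_normals
```

In practice the lookup would usually be bilinear and the mesh would be subdivided first, so that the vertex density is high enough to carry the detail stored in the map.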

Pipeline

Our processing pipeline. Top: training stage for (a) emotion-driven proxy generation and (b) facial detail synthesis. Bottom: testing stage for an input image.