Recently, GANs have been widely used for portrait image generation. However, in the latent space learned by GANs, different attributes, such as pose, shape, and texture style, are generally entangled, making the explicit control of specific attributes difficult. To address this issue, we propose a SofGAN image generator to decouple the latent space of portraits into two subspaces: a geometry space and a texture space. The latent codes sampled from the two subspaces are fed to two network branches separately, one to generate the 3D geometry of portraits with canonical pose, and the other to generate textures. The aligned 3D geometries also come with semantic part segmentation, encoded as a semantic occupancy field (SOF). The SOF allows the rendering of consistent 2D semantic segmentation maps at arbitrary views, which are then fused with the generated texture maps and stylized to a portrait photo using our semantic instance-wise (SIW) module. Through extensive experiments, we show that our system can generate high quality portrait images with independently controllable geometry and texture attributes. The method also generalizes well in various applications such as appearance-consistent facial animation and dynamic styling.

Overview

First row: our decoupled representation allows explicit control over pose, shape and texture styles. Starting from the source image, we explicitly change it's head pose (2nd image), facial/hair contour (3rd image) and texture styles. Second row: interactive image generation from incomplete segmaps. We allow users to gradually add parts to the segmap and generate colorful images on-the-fly.

Applications

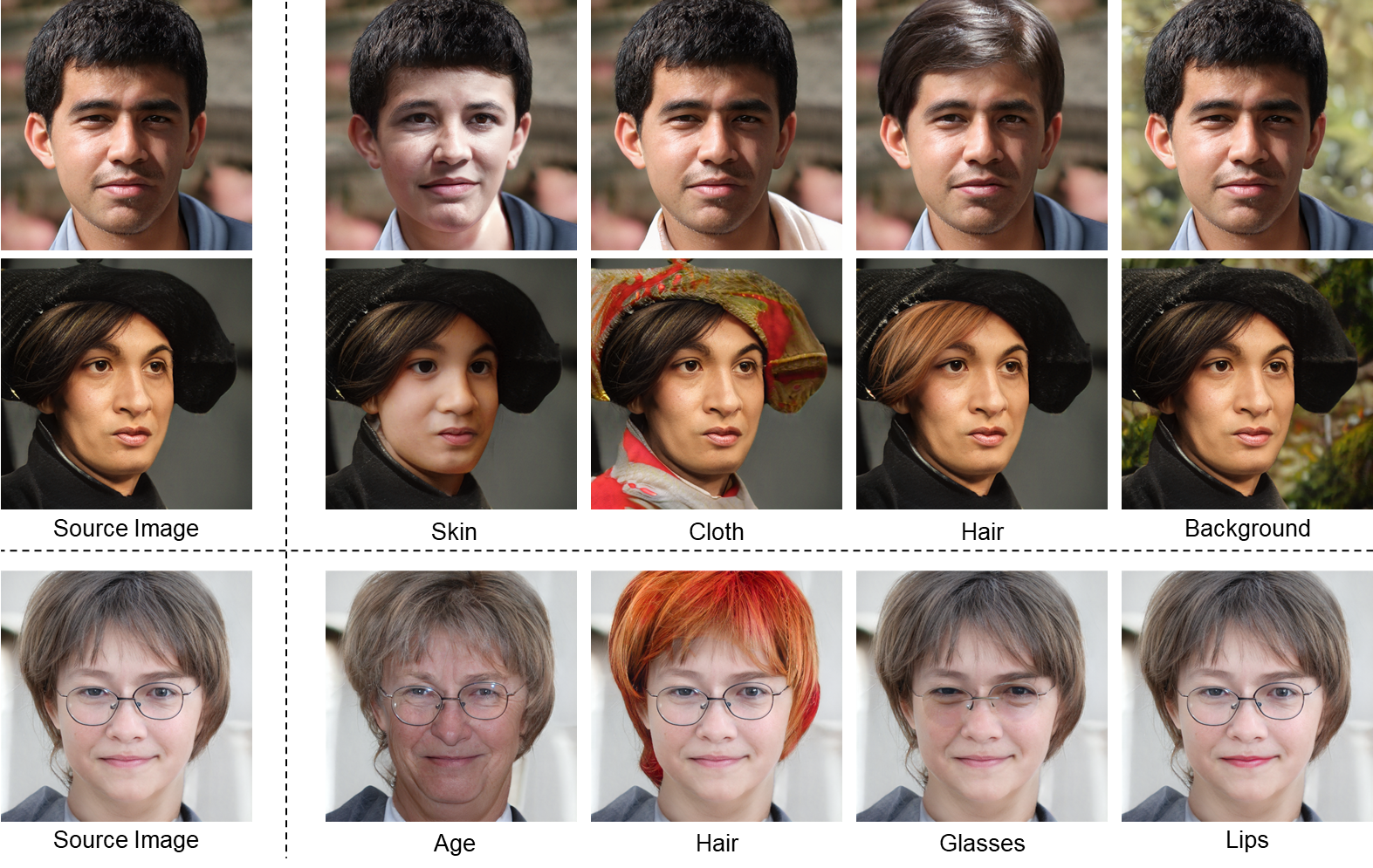

The first two videos demonstrate the regional style adjustment conditing on specify semantic segmentation map. One of the key features of our SIW-StyleGAN is semantic-level style controlling. Benefiting from the StyleConv blocks and style mixing training strategy, we could separately control the style for each semantic region.

Video 1: Regional style adjustment

Video 2: Style transfer

Under our generation framework, we can generate free-viewpoint portrait images from geometric samples or real images by changing the camera pose. our SOF is trained with multi-view semantic segmentation maps, the geometric projection constraint between views is encoded in the SOF, which enables our method to keep shape and expression consistent when changing the viewpoint.

Video 3a: Free view point rendering and shape morphing

Video 3b: Free view point rendering and shape morphing

we collect a video clip from Internet and generate segmentation maps for each frame with a pre-trained face parser. Our method can preserve texture style and shape consistency on various poses and expressions without any temporal regularization.

Video 4: Animated video sequences

Our method allow users to gradually add parts to the segmap and generate colorful images on-the-fly.

Video 5a: Generation from drawing

Acknowledgments

We thank Xinwei Li and Qiuyue Wang for dubbing the video, Zhixin Piao for comments and discussions, and Kim Seonghyeon and Adam Geitgey for sharing their StyleGAN2 implementation and face recognition code for our comparisons and quantity evaluation.